AI detectors analyse a text to decide whether it was written by AI or by a human.

They generally use AI to analyse the language in the text. AI detectors also compare the text against a dataset (a collection of texts that are known to be AI-generated or human-written).

What is AI Detection?

AI detection is a way to figure out if a text was written by a person or by a computer program.

It uses algorithms that have learned from lots of examples of writing from both humans and computers.

These tools look at the writing and then predict whether a computer wrote it, along with a score showing how confident they are in that prediction.

AI detection involves using trained classifiers (basically a way of separating text into categories) to distinguish between human and AI-generated text.
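To make the idea of a trained classifier a little more concrete, here is a minimal sketch of one of the simplest kinds, a naive Bayes classifier built on word counts. It is a toy illustration only and is not how Winston AI or any production detector works. The four training texts, their labels and the function names (`tokenize`, `train`, `predict`) are all made up for the example.

```python
import math
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return "".join(c.lower() if c.isalpha() else " " for c in text).split()

def train(examples):
    """Count words per label from a list of (text, label) pairs."""
    word_counts = {}
    doc_counts = Counter()
    for text, label in examples:
        doc_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(tokenize(text))
    return word_counts, doc_counts

def predict(model, text):
    """Return (label, confidence) using naive Bayes with add-one smoothing."""
    word_counts, doc_counts = model
    vocab = set().union(*word_counts.values())
    total_docs = sum(doc_counts.values())
    log_scores = {}
    for label, counts in word_counts.items():
        total_words = sum(counts.values())
        score = math.log(doc_counts[label] / total_docs)
        for word in tokenize(text):
            score += math.log((counts[word] + 1) / (total_words + len(vocab)))
        log_scores[label] = score
    # Turn the log scores into probabilities that sum to 1.
    top = max(log_scores.values())
    exp_scores = {k: math.exp(v - top) for k, v in log_scores.items()}
    norm = sum(exp_scores.values())
    best = max(exp_scores, key=exp_scores.get)
    return best, exp_scores[best] / norm

# Hypothetical, hand-written training texts (a real dataset would be far larger).
TRAINING = [
    ("as an ai language model i can provide a comprehensive overview", "ai"),
    ("in conclusion it is important to note that there are many factors", "ai"),
    ("honestly my bus was late again so i missed the first lecture", "human"),
    ("we grabbed coffee and argued about the match all afternoon", "human"),
]

model = train(TRAINING)
label, confidence = predict(
    model, "it is important to note that this overview is comprehensive"
)
print(label, round(confidence, 3))
```

The second value returned by `predict` plays the role of the confidence score mentioned above. With only four training texts it is not meaningful in practice; the point is simply to show the shape of the approach.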

Why Do We Need AI Detectors?

We need AI Detectors because people can now use AI tools to create texts such as online articles, essays, and other important documents.

This matters in any situation where the accuracy or authorship of a text is important.

Can you imagine a lawyer presenting a case file written by ChatGPT and not checked for factual accuracy? (This has actually already happened.)

We also have many students using AI tools to do their homework for them. This wastes so much time for teachers who have to correct work that ChatGPT did.

Are you starting to see the importance of AI Detectors now?

How Do AI Detectors Work?

Many AI Detectors are not very transparent about how their AI Detection tool works.

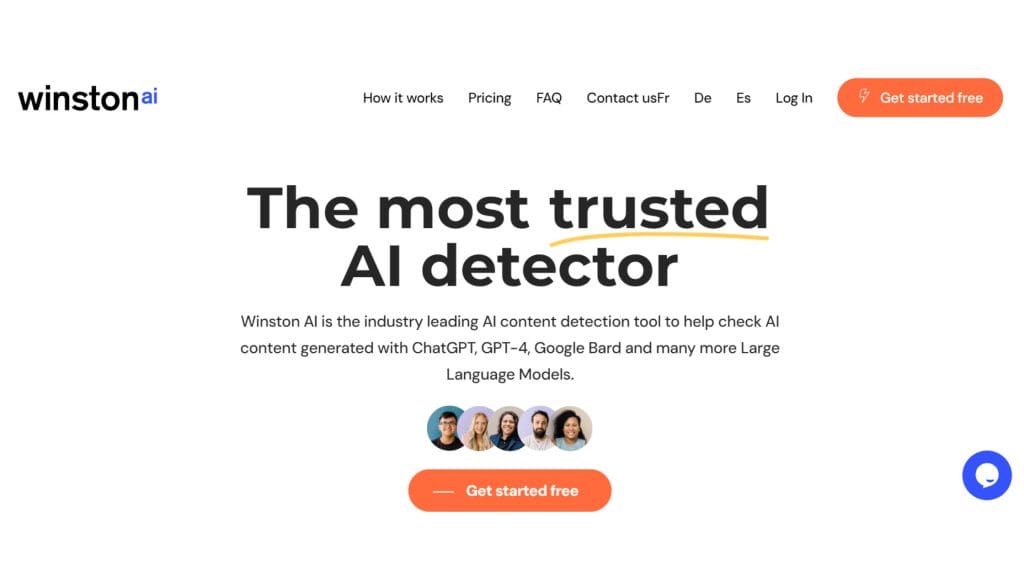

We at Winston AI pride ourselves on being open about how our AI detection works.

AI Detectors usually work by combining two tests:

- Language analysis

The language analysis test checks for patterns of language that AI tools are known for. Some examples are when AI text repeats itself, is very generic (nothing interesting) or contains completely invented false information.

- Comparison to dataset

We have a dataset of over 10,000 texts. This allows us to compare new texts to texts that we know are either AI texts or Human-written texts.
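The two tests can be sketched in a few lines of code. This is a simplified illustration under my own assumptions, not Winston AI's actual method. `repetition_ratio` stands in for one possible language-analysis signal (how often three-word phrases repeat), and `nearest_label` stands in for the dataset comparison by finding the most similar labelled text. The function names and the two-item `DATASET` are hypothetical.

```python
import math
from collections import Counter

def words(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return "".join(c.lower() if c.isalnum() else " " for c in text).split()

def repetition_ratio(text, n=3):
    """Fraction of word n-grams that occur more than once (0.0 = none)."""
    tokens = words(text)
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0
    counts = Counter(ngrams)
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(ngrams)

def cosine_similarity(a, b):
    """Cosine similarity between two word-count Counters."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def nearest_label(text, dataset):
    """Return (label, similarity) of the most similar labelled text."""
    query = Counter(words(text))
    scored = [
        (cosine_similarity(query, Counter(words(sample))), label)
        for sample, label in dataset
    ]
    best_score, best_label = max(scored)
    return best_label, best_score

# Hypothetical labelled texts standing in for a real dataset.
DATASET = [
    ("it is important to note that there are many key factors to consider", "ai"),
    ("my gran used to burn the toast every single sunday morning", "human"),
]

repetitive = "the cat sat on the mat the cat sat on the rug"
print(repetition_ratio(repetitive))
print(nearest_label("it is important to consider many factors", DATASET))
```

A real detector would combine many more signals than these two and would weigh them with a trained model rather than reading them off directly.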

If you want access to our dataset or simply to learn more about our accuracy rates, you can read more here.

As you can see, AI detectors are only as good as their algorithms. That is why many of the free AI detectors you can find on Google simply don’t work: they don’t take as much care as we do.

Are AI Content Detectors Accurate?

Not all AI content detectors are accurate, and this is a problem in the industry. As mentioned above, other tools have been launched without having the best technology or dataset.

Our tool (Winston AI) has an accuracy rate of 99.98% when detecting AI content.

Honestly, I have tried it out hundreds of times and it has never been wrong.

I have also tried other detectors and they often fail miserably.

That is why you need to be careful.

There have been significant problems with people using below-par AI Detectors, and issues arise such as students being falsely accused of cheating by universities.
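False accusations are what a detector's false positive rate measures, and that number can be poor even when the headline accuracy looks good. Here is a small sketch of how both figures are calculated from a set of checked texts. The function name `detector_metrics` and the ten example labels are invented purely for illustration and are not results from any real detector.

```python
def detector_metrics(true_labels, predicted_labels, positive="ai"):
    """Compute accuracy, false positive rate and false negative rate."""
    tp = fp = tn = fn = 0
    for truth, guess in zip(true_labels, predicted_labels):
        if guess == positive and truth == positive:
            tp += 1  # AI text correctly flagged
        elif guess == positive:
            fp += 1  # human text wrongly flagged as AI
        elif truth == positive:
            fn += 1  # AI text that slipped through
        else:
            tn += 1  # human text correctly passed
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }

# Made-up example: 5 AI texts and 5 human texts, one human text wrongly flagged.
truth = ["ai"] * 5 + ["human"] * 5
predicted = ["ai"] * 5 + ["ai"] + ["human"] * 4

metrics = detector_metrics(truth, predicted)
print(metrics)
```

In this made-up case the detector is right 9 times out of 10, yet 1 in 5 human writers is wrongly flagged. That is why it is worth asking about false positives as well as overall accuracy when choosing a tool.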

This independent testing of many AI Detectors found Winston AI to be the most accurate on the market.

Can you Bypass an AI Detector?

It is possible to bypass an AI detector depending on the AI detector that you are using.

Many detectors only work on text from some AI tools, and detectors need to keep pace with the rapid updates of those tools.

To have a great AI Detector tool, you need to keep up with all of the models like GPT-4, Google Gemini and Claude.

There also exist tools that call themselves “humanizers”. These tools aim to change the patterns of text in an attempt to bypass AI detectors.

None of these “undetectable” tools has worked in my testing, but then again I have been using the gold standard in AI detection, Winston AI.

Who Needs a Good AI Detector?

The following parties should invest in a robust AI content detector like Winston AI.

Teachers/Schools/Universities

Teachers need AI detection tools to maintain academic integrity. If you don’t know whether a text was written by AI or by a student, then what is the point in giving assignments?

Education must change and students and teachers must keep up with the latest technological advances and engage with AI.

Government Offices

Government offices should use AI Detection tools to check if important documentation was created with AI.

AI can be a big help in creating texts but should not replace official documentation written by government officials.

Employers

Employers generally don’t like their employees using AI to complete their work. Using AI can often lead to below-par work.

Employees need to be trained to use AI to augment their work. Take, for example, writing an email with AI. The first draft that ChatGPT creates will often be too long and lack that personal touch. With training, employees can learn how to prompt ChatGPT better and edit the AI text to provide an excellent email.

Police Departments

Fraud and Forgery are crimes across the world and AI can be used to impersonate people.

We already have technology such as voice cloning, which mimics the sound of someone’s voice.

There is a need for an AI detector for voice, videos and images as well as text.

Blogging/Social Media Platforms

Platforms such as Medium thrive on human-written content and stories. AI-written text is already at the level that most people can’t tell the difference.

This may prove a problem for social media and blogging platforms in the future if they receive mass-produced content and struggle to find the best content among it.

Publishers/News Outlets

Publishers need AI detectors to know if their writers used AI to write their stories and articles. We have already seen several cases of websites using AI and producing poor-quality content.

The problem with AI is that it sometimes hallucinates. This means that it guesses facts when it is unable to find the right information. The AI also presents these incorrect facts in a highly convincing way, making it very difficult for us to know what is true and what is false.

What’s the Difference Between Plagiarism and AI Detection?

Plagiarism is when you copy text directly from another source. It is important to know that using AI is not generally considered plagiarism as AI is not directly copying work but rather using other sources as input.

You can read more about whether using ChatGPT is plagiarism here.

Final Thoughts

As you can see, the technology behind AI detection tools can be a little bit difficult to understand for people who are not experts in AI.

The process of testing for AI content is actually very straightforward, and I urge you to give Winston AI a test for yourself.